Let’s figure out a way to start signing RubyGems

Digital signatures are a passion of mine (as is infosec in general). Signatures are an integral part of my cryptosystem Keyspace, for which I wrote the red25519 gem. The red25519 gem’s sole purpose was to expose the state-of-the-art Ed25519 digital signature algorithm in Ruby. I have since moved on to implementing Ed25519 in the much more comprehensive RbNaCl gem. Point being, I have longed for a modern, secure digital signature system in Ruby and have been working hard to make that a reality.

Digital signatures are something I think about almost every single day, and that’s probably fairly unusual for a Rubyist. That said, if you do work with Ruby, you have hopefully been informed that RubyGems.org was compromised and that the integrity of all gems it hosted is now in question. Because of this, RubyGems.org is down. As someone who thinks about digital signatures every day, I have certainly thought quite a bit about how digital signatures could’ve helped this situation.

And then I talk to people… not just one person, but several people, who make statements like “signing gems wouldn’t have helped here”.

These people are wrong. They are so wrong. They are so so very wrong. They are so so very very wrong I can’t put it into words, so imagine a double facepalm here.

These people are so wrong I’m not even going to bother talking about how wrong they are. Suffice it to say that I think about digital signatures every day, and the people making this claim probably don’t (if you do, I’d love to hear from you). Instead, here’s an analogy for what they’re saying:

The lock that secures the door of your home can be picked. It doesn’t matter how expensive a lock you buy, someone can pick it. You can buy a top-of-the-line Medeco lock. It doesn’t matter. Just go to DEF CON and watch some of the top lockpickers in the world open 10 Medeco locks in a row. Because locks are pickable, they are pointless, so we shouldn’t put any locks on our doors at all.

Does that sound insane to you? I accept the truth that locks are easily pickable, but I certainly want locks on anything and everything I own. Putting locks on things you want to secure is common sense, and part of a strategy known as defense in depth. If someone tells you not to put a lock on your door just because locks are pickable, they’re not really a trustworthy source of security advice. We should start signing gems in the hope that the practice gains enough traction to be useful to everyone, not give up in despair because signing gems seems like a fruitless endeavor. If you disagree, I wonder how willingly you’d part with the easily pickable deadbolt on your front door.

I can’t offer a perfect system with unpickable locks, but I think we can practically deploy a system that requires few if any changes to RubyGems, and I also have suggestions about things we can do to advance the state of the art in RubyGems security. So rather than micro-analyzing the arguments of people who say digital signatures don’t work (which, IMO, are flimsy at best), let’s jump into my plan for how they could work.

Understanding the Problems

I became passionate about signing RubyGems about a year ago. I even had a plan. I wasn’t sure at the time that it was entirely a good idea, but now I certainly think it is. I talked about it with several people, including RubyGems maintainers like Eric Hodel. I can’t say he was thrilled about my idea, but he thought it was at least good enough not to tell me no. Now that the RubyGems.org hack has happened, I think it’s time to revisit the idea.

First, let’s talk about what we’d like to accomplish by signing gems, starting with a hypothetical (but not really!) scenario. Say you want to add some gems to a Ruby app, and RubyGems.org has been compromised (you know, like what just happened): someone has uploaded a malicious version of some obscure Rails dependency like “hike”. You probably aren’t thinking to look at the source code of the “hike” gem, are you? In fact, you probably have no idea what the hike gem is (that’s okay, before today neither did I).

Let’s say someone has done all this, and RubyGems.org doesn’t even know it has happened yet. Meanwhile you’re running “bundle update rails”, possibly in response to the very security vulnerabilities that led to RubyGems.org being hacked in the first place. Bundler has just downloaded the compromised version of the “hike” gem, and you are completely unaware.

You now deploy your app with the compromised “hike” gem (as does anyone else who upgraded Rails during the window in which RubyGems.org was compromised), and your app now contains a malicious payload.

Does a malicious payload scare you? It should. Do you even know what power gems have over your system? Even gem installation gives a malicious gem maker wide-ranging control over your system, because gemspecs take the form of either arbitrary Ruby code or YAML files, and YAML parsing in Ruby is known to have had code execution vulnerabilities.
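To make the Ruby half of that concrete, here is a deliberately harmless sketch. Loading a `.gemspec` means evaluating it (`Gem::Specification.load` does essentially this with `eval`), so every statement in the file runs, not just the `Gem::Specification.new` block at the end. The `$gemspec_side_effect` global is a made-up stand-in for a payload, and the spec's contents are illustrative.

```ruby
require "rubygems"

# A Ruby gemspec is just Ruby: everything in it runs when it is evaluated.
gemspec_source = <<~'RUBY'
  $gemspec_side_effect = :ran   # stand-in for a payload; could be any Ruby code
  Gem::Specification.new do |s|
    s.name    = "hike"
    s.version = "0.0.1"         # hypothetical version
    s.summary = "Illustrative spec"
    s.authors = ["Example Author"]
  end
RUBY

# Roughly what Gem::Specification.load does with a .gemspec file's contents.
spec = eval(gemspec_source, binding, "hike.gemspec")

puts spec.name                    # => hike
puts $gemspec_side_effect.inspect # => :ran (the "payload" already executed)
```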

Need some concrete examples? Check out some of the gems Benjamin Smith wrote last year. Here’s a gem that will hijack the sudo command, steal your password, then use it to create a new administrative user, enable SSH, and notify a Heroku app of the compromise. And here’s another gem he made which uses a native extension to copy your entire app’s source code into the public directory then notifies a Heroku app so an attacker can download it. Malicious gems have wide-ranging powers and can do some really scary stuff!

Whose fault is it that your app is now running a malicious payload? Is it RubyGems’ fault for getting hacked in the first place? Or is it your fault for putting code into production without auditing it first?

Here’s my conjecture: RubyGems is going to get hacked. I mean, it already did. We should anticipate that it will happen again and design the RubyGems software itself so that it doesn’t matter if RubyGems.org gets hacked: we can detect modified code and prevent it from ever being loaded within our applications. This isn’t quite what RubyGems provides today with its signature system (I sure wish RubyGems verified gems as the very first thing, before it does anything else!), but it’s close, and it’s a goal we should work towards.
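As a minimal sketch of what "verify first" could look like, assuming the publisher's RSA public key is already known and trusted: the hypothetical `install_verified` helper below checks a signature over the raw package bytes and refuses to hand them to anything else (unpacking, metadata parsing, extension builds) if the check fails. This is not how RubyGems' installer is structured today; the SHA-256 digest and the block-based hand-off are choices made for the example.

```ruby
require "openssl"

class UnverifiedGemError < StandardError; end

# Hypothetical install entry point: the signature over the raw package bytes
# is checked before anything else touches them. Only then does the block run.
def install_verified(package_bytes, signature, publisher_public_key)
  ok = begin
    publisher_public_key.verify(OpenSSL::Digest.new("SHA256"), signature, package_bytes)
  rescue OpenSSL::PKey::PKeyError
    false # malformed signature: treat as unverified
  end
  raise UnverifiedGemError, "signature check failed; refusing to process package" unless ok

  yield package_bytes
end

publisher = OpenSSL::PKey::RSA.new(2048)
package   = "pretend .gem archive bytes"
signature = publisher.sign(OpenSSL::Digest.new("SHA256"), package)

installed = []
install_verified(package, signature, publisher.public_key) { |bytes| installed << bytes }

# A tampered package never reaches the install step.
rejected = begin
  install_verified(package + " plus a payload", signature, publisher.public_key) { |bytes| installed << bytes }
  false
rescue UnverifiedGemError
  true
end

puts installed.size # => 1
puts rejected       # => true
```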

There’s a more general principle behind the idea that a site like RubyGems.org, which stores a valuable resource like Ruby libraries, can get hacked without an attacker being able to trick you into loading malicious code, because cryptosystems are in place to detect and reject forgeries. That principle is the Principle of Least Authority. Simply put, it says that to build secure systems, we must give each part as little power as possible. I think it is unwise to rely on RubyGems to deliver us untainted gems. That’s not to say those guys aren’t doing a great job; it’s just inevitable that they will get hacked (as has been demonstrated empirically).

A dream system, built around digital signatures, should ensure that there’s no way someone could forge gems (obligatory RubyForge joke here) for any particular project without compromising that project’s private key. Unfortunately RubyGems does not presently support project-level signatures. That’s something I’ll talk about later. But first, let’s talk about what RubyGems already has, and how that is already useful to the immediate situation.

RubyGems supports a signature system which relies on RSA encryption of a SHA1 hash, which is more or less RSAENC(privkey, SHA1(gem)). This isn’t a “proper” digital signature algorithm but is fairly similar to systems seen in Tahoe: The Least Authority Filesystem and SSH. RubyGems can maintain a certificate registry and check if all gems are signed, and prevent the system from starting in the event there are unsigned gems.
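For intuition, the scheme as described can be sketched with Ruby's bundled `openssl` library. This illustrates `RSAENC(privkey, SHA1(gem))` only. It is not RubyGems' actual `Gem::Security` code, the helper names `rsa_enc_sign` and `rsa_enc_verify` are hypothetical, and a new design should prefer `PKey#sign`/`PKey#verify` with a stronger hash than SHA1.

```ruby
require "openssl"
require "digest"

# "Signing": RSA-encrypt the raw SHA1 digest of the package with the private
# key (PKCS#1 v1.5 padding, the default for private_encrypt).
def rsa_enc_sign(private_key, gem_bytes)
  private_key.private_encrypt(Digest::SHA1.digest(gem_bytes))
end

# "Verifying": decrypt with the public key and compare the recovered bytes
# with a freshly computed digest of the package.
def rsa_enc_verify(public_key, gem_bytes, signature)
  public_key.public_decrypt(signature) == Digest::SHA1.digest(gem_bytes)
rescue OpenSSL::PKey::PKeyError
  false # wrong key or corrupted signature: the padding check fails
end

key = OpenSSL::PKey::RSA.new(2048)
sig = rsa_enc_sign(key, "gem bytes")
puts rsa_enc_verify(key.public_key, "gem bytes", sig)      # => true
puts rsa_enc_verify(key.public_key, "tampered bytes", sig) # => false
```

Because verification needs only the public key, anyone holding the signer's certificate can run this check offline, without trusting the server that delivered the gem.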

It’s close to what’s needed, and would provide quite a bit even in its current state. For example, suppose RubyGems.org had been using gem signatures all along and also had trusted offsite backups of its database that it knew weren’t compromised. It could restore a backup of its database, and from that restore its own trust model of who owns which gems. Just in case, it could ask everyone to reset their passwords and upload their public keys. Perhaps we could keep track of people whose public keys changed during the reset process and flag them for further scrutiny, in case an attacker was trying to compromise the system via the reset process itself.
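The key-change check could be as simple as comparing fingerprints. A minimal sketch, assuming a trusted pre-breach backup that maps each owner to a SHA-256 fingerprint of their DER-encoded public key; `flag_changed_keys` and the sample owners are hypothetical:

```ruby
require "openssl"
require "digest"

# SHA-256 over the DER-encoded public key: a stable identifier for a key.
def fingerprint(public_key)
  Digest::SHA256.hexdigest(public_key.to_der)
end

# Compare fingerprints from the trusted backup with the keys owners upload
# during the reset. Anyone whose key changed is flagged for manual review.
# Owners with no key on record are not "changed" and are left to other checks.
def flag_changed_keys(backup_fingerprints, uploaded_keys)
  uploaded_keys.each_with_object([]) do |(owner, key), flagged|
    before = backup_fingerprints[owner]
    flagged << owner if before && before != fingerprint(key)
  end
end

alice_key   = OpenSSL::PKey::RSA.new(2048)
bob_old_key = OpenSSL::PKey::RSA.new(2048)
bob_new_key = OpenSSL::PKey::RSA.new(2048)

backup  = { "alice" => fingerprint(alice_key.public_key), "bob" => fingerprint(bob_old_key.public_key) }
uploads = { "alice" => alice_key.public_key, "bob" => bob_new_key.public_key }

p flag_changed_keys(backup, uploads) # => ["bob"]
```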

Once this has happened, RubyGems.org could verify gems against the public keys of their owners. This would allow it to automatically verify many gems and quarantine those that can’t be checked against their owners’ certificates. This is relatively easy to automate, and could’ve gotten RubyGems.org back online with a limited set of cryptographically verifiable gems in much less time than it has taken to date.
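A sketch of that bulk triage, assuming each restored gem file records its owner and a detached RSA/SHA-256 signature over its bytes. The `GemFile` struct, the `triage` helper, and the sample data are all hypothetical, not RubyGems.org's schema:

```ruby
require "openssl"

# Hypothetical record for a gem file restored from storage.
GemFile = Struct.new(:name, :owner, :bytes, :signature)

def signed_by?(public_key, bytes, signature)
  return false if public_key.nil? || signature.nil?
  public_key.verify(OpenSSL::Digest.new("SHA256"), signature, bytes)
rescue OpenSSL::PKey::PKeyError
  false
end

# Split gems into those verifiable against their owner's restored public key
# and those to quarantine (unsigned, unknown owner, or bad signature).
def triage(gem_files, owner_keys)
  gem_files.partition { |g| signed_by?(owner_keys[g.owner], g.bytes, g.signature) }
end

alice   = OpenSSL::PKey::RSA.new(2048)
mallory = OpenSSL::PKey::RSA.new(2048)
sign    = ->(key, bytes) { key.sign(OpenSSL::Digest.new("SHA256"), bytes) }

gems = [
  GemFile.new("hike-good",   "alice", "good bytes", sign.call(alice, "good bytes")),
  GemFile.new("hike-forged", "alice", "evil bytes", sign.call(mallory, "evil bytes")),
  GemFile.new("unsigned",    "bob",   "some bytes", nil),
]
owner_keys = { "alice" => alice.public_key }

verified, quarantined = triage(gems, owner_keys)
p verified.map(&:name)    # => ["hike-good"]
p quarantined.map(&:name) # => ["hike-forged", "unsigned"]
```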

This is all well and good, but what happens if an attacker manages to forge a certificate that RubyGems accepts as legitimate? RubyGems needs some kind of trust root to authenticate certificates against. With such a trust root, it could compare things like the email address on a RubyGems.org account (which it could double-check via an email confirmation) against the one listed in the certificate, to at least ensure a given certificate was valid for a given email address. It could also look at the certificate’s timestamp and ensure it significantly predates a given attack. But to do any of that, it would need a trust root that isn’t RubyGems itself.
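Assuming certificates are ordinary X.509 certificates issued by such a trust root, the checks just described might look like the sketch below. `acceptable_cert?`, `make_cert`, the seven-day margin, and the sample names are assumptions for illustration; a real registry would also check validity periods and revocation.

```ruby
require "openssl"

DAY = 24 * 3600

# Hypothetical helper that mints an X.509 certificate (self-signed if issuer_cert is nil).
def make_cert(subject, key, issuer_cert, issuer_key, not_before, serial)
  cert = OpenSSL::X509::Certificate.new
  cert.version    = 2 # X.509 v3
  cert.serial     = serial
  cert.subject    = OpenSSL::X509::Name.parse(subject)
  cert.issuer     = issuer_cert ? issuer_cert.subject : cert.subject
  cert.public_key = key.public_key
  cert.not_before = not_before
  cert.not_after  = not_before + 730 * DAY
  cert.sign(issuer_key, OpenSSL::Digest.new("SHA256"))
  cert
end

# Checks a registry might run: issued and signed by the trust root, email
# matches the account's (confirmed) email, and issued well before the attack.
def acceptable_cert?(cert, root_cert, account_email, attack_time, margin: 7 * DAY)
  return false unless cert.issuer.to_der == root_cert.subject.to_der
  return false unless cert.verify(root_cert.public_key)
  entry = cert.subject.to_a.find { |name, _value, _type| name == "emailAddress" }
  return false unless entry && entry[1].downcase == account_email.downcase
  cert.not_before <= attack_time - margin
end

now       = Time.now
attack    = now - 2 * DAY
root_key  = OpenSSL::PKey::RSA.new(2048)
root_cert = make_cert("/CN=Example Gem Trust Root", root_key, nil, root_key, now - 400 * DAY, 1)
alice_key = OpenSSL::PKey::RSA.new(2048)
alice     = make_cert("/CN=alice/emailAddress=alice@example.com", alice_key, root_cert, root_key, now - 90 * DAY, 2)

p acceptable_cert?(alice, root_cert, "alice@example.com", attack)   # => true
p acceptable_cert?(alice, root_cert, "mallory@example.com", attack) # => false
```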

The Identity Problem: Solving Zooko’s Triangle

Who can RubyGems trust if not RubyGems? Is there a way to build a distributed trust system where there is no central authority? Can we rely on a web-of-trust model instead?

The answer is: kind of in theory, probably not in practice. We could allow everyone who wants to publish a gem to sign it with their private key. Their certificate could include their name, along with cryptographic proof (a signature made with their private key) that they at least claim that’s their name. Let’s call this person Alice.

Is it a name we know? Perhaps it is! Perhaps we met Alice at a conference. Perhaps we thought she was really cool and trustworthy. Awesome! A name we know. But is Alice really Alice? Or is “Alice” actually a malicious Sybil (false identity, a.k.a. sock puppet) pretending to be the real Alice?
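The catch is that a self-signed certificate proves only that whoever holds the key claims the name in it. A short sketch (a hypothetical `self_signed` helper using RSA keys from Ruby's `openssl`) shows two unrelated keys producing equally valid certificates for "Alice":

```ruby
require "openssl"

# Anyone can mint a self-signed certificate carrying any name they like.
def self_signed(common_name)
  key  = OpenSSL::PKey::RSA.new(2048)
  cert = OpenSSL::X509::Certificate.new
  cert.version    = 2
  cert.serial     = 1
  cert.subject    = OpenSSL::X509::Name.parse("/CN=#{common_name}")
  cert.issuer     = cert.subject # self-signed: the issuer is the subject itself
  cert.public_key = key.public_key
  cert.not_before = Time.now
  cert.not_after  = Time.now + 365 * 24 * 3600
  cert.sign(key, OpenSSL::Digest.new("SHA256"))
  cert
end

real_alice  = self_signed("Alice")
sybil_alice = self_signed("Alice") # minted by an attacker

# Both certificates check out against their own keys and carry the same name...
p real_alice.verify(real_alice.public_key)            # => true
p sybil_alice.verify(sybil_alice.public_key)          # => true
p real_alice.subject.to_s == sybil_alice.subject.to_s # => true
# ...but they hold different keys, and nothing in either cert says which is real.
p real_alice.public_key.to_der == sybil_alice.public_key.to_der # => false
```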

We don’t know, but perhaps we can Google around for Alice and attempt to find her information on the intarwebs. Hey, here’s her blog, her Twitter, etc. all with her name and recent activity that seems plausible.

If we really want to be certain Alice’s public key is authentic, now we need to contact her. You’ve found her Twitter, so perhaps you can tweet at her “Hey Alice, is this your key fingerprint?” Alice may respond yes, but perhaps the attacker has stolen her phone and thus compromised Alice’s Twitter account. (Silly Alice, should’ve used full disk encryption on your phone ;)

To really do our due diligence, perhaps we can try to authenticate Alice through multiple channels. Her Twitter, her email, her Github, etc. But it sure would be annoying to Alice if everyone who ever wanted to use Alice’s gems had to pester Alice across so many channels just to make sure Alice’s signature belongs to the real Alice.
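Checking a fingerprint across channels is mechanical once the answers are in hand. A sketch, assuming the fingerprint is SHA-256 over the DER-encoded public key written as colon-separated hex (a format chosen for illustration) and that each channel's answer has already been collected. The `key_fingerprint` and `confirmed_everywhere?` helpers are hypothetical:

```ruby
require "openssl"
require "digest"

# Human-comparable fingerprint: SHA-256 of the DER-encoded public key,
# written as colon-separated hex pairs.
def key_fingerprint(public_key)
  Digest::SHA256.hexdigest(public_key.to_der).scan(/../).join(":")
end

# Accept the key only if every channel we asked (hypothetical answers from
# Twitter, email, Github, ...) reports the fingerprint of the key in the gem.
def confirmed_everywhere?(key_from_gem, answers_by_channel)
  expected = key_fingerprint(key_from_gem)
  !answers_by_channel.empty? && answers_by_channel.values.all? { |fp| fp == expected }
end

alice_key = OpenSSL::PKey::RSA.new(2048)
alice_fp  = key_fingerprint(alice_key.public_key)
puts alice_fp # 32 colon-separated hex pairs

answers = { twitter: alice_fp, email: alice_fp, github: alice_fp }
p confirmed_everywhere?(alice_key.public_key, answers) # => true

# The gem actually carries Mallory's key. Mallory controls Alice's Twitter,
# but Alice's email and Github still report her real fingerprint.
mallory_key    = OpenSSL::PKey::RSA.new(2048)
answers_attack = answers.merge(twitter: key_fingerprint(mallory_key.public_key))
p confirmed_everywhere?(mallory_key.public_key, answers_attack) # => false
```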

It sure would be nice if we could centralize this identity verification process, and have a cryptographically verifiable certificate which states (until revoked, see below) that Alice really is Alice, this really is her public key, this really is her email address, this really is her Github, and so on.

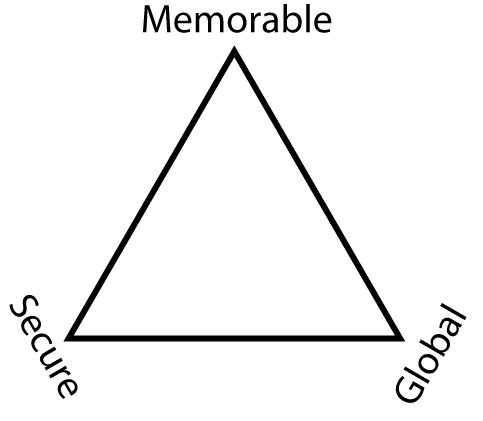

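Is there any option but centralization in this case? Not really… we’ve run afoul of Zooko’s triangle, which holds that a naming system can’t simultaneously be human-meaningful, secure, and decentralized (global): at best you get two of the three.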

Modeling identity is a hard problem. We can attempt to tie identities to easily memorable, low-entropy things like names or email addresses, but doing that securely is rather difficult, as I hope I’ve just illustrated. We could instead tie identities to hard-to-remember things: large numbers produced by irreversible transformations of other large random numbers, where computing the inverse is an intractable problem such as the discrete logarithm (or at least it’s intractable until large-scale quantum computers arrive).

If we choose to identify people by random-looking numbers, we don’t need to have any type of trust root. Per the diagram of Zooko’s triangle above, random-looking numbers can be used as the basis of a “global” system where we have no central trust authority.

But there’s a problem: random-looking numbers give us no information about whether or not we trust a particular person who holds a particular public key. We know absolutely nothing from random-looking numbers. So we’re back to chasing down the person whose name appears in a certificate and asking them if we really have the right number.

To have a practical system that centralizes and automates the process of tying people’s random-looking numbers to the memorable parts of their identities, like names and email addresses, we can’t have a global system. We need a centralized one. We need someone we trust to delegate this authority to: someone who will be diligent about at least attempting to verify people’s identities, and who will issue signed certificates that are not easily obtainable and that resist active attacks thanks to manual steps in the process and human-level scrutiny of the inputs.

This is the basic idea of a certificate authority. In general the idea has probably lost favor in recent years, with the HTTPS CA system fragmenting into an incomprehensibly complex quagmire of trust relationships. But I’m not proposing anything nearly that complex.

I propose someone steps up and runs some kind of certificate authority/trust authority for RubyGems. You may be thinking that RubyGems itself is best equipped to do this sort of thing, but I think there would be value in having some 3rd party specifically interested in security responsible for this endeavor. At the very least, as a different organization running what is hopefully a different codebase on a different infrastructure, there’s some defense in depth as far as not having all your eggs in one basket. Separating the trust authority from the gem monger makes for two targets instead of one.

This CA shouldn’t be run like practically any other CA in existence. It should be run by volunteers and provided free of charge. It should be unobtrusive, relying on fragments of people’s online identities to piece together their personhood and gauge whether or not they should be issued a certificate. It should be noob-friendly, not requiring that people be particularly well known or have a large body of published software, while still performing due diligence in ascertaining someone’s identity.

I don’t think any system which relies on unauthenticated third party certificates can provide any reasonable kind of security. I think, at the very least, a CA is needed to impede the progress of the malicious.

PKI sucks! Ruby security is unfixable!

Yes, PKI sucks. There are all sorts of things that can go wrong with a CA. People can hack the CA and steal its private key! If that happens, you probably shouldn’t be running a CA. If a CA relies on a manual review process, people can also social engineer the CA into issuing certificates to be used maliciously.

This is particularly easy if the CA doesn’t require much in the way of identity verification (a necessity if you’re trying to be noob-friendly). Attackers may even have already compromised the very trust vectors a CA like this would use to review certificate requests.

These are smaller issues compared to the elephant in the room: If I were to run a CA, you’d have to trust me. Do you trust me? I talk a lot of shit on Twitter. If you judge me solely by that, you probably don’t trust me. But would you trust me to run a CA?

All that said, I’m trying to write security software in Ruby, and I feel that thanks to all the recent security turmoil, Ruby has probably gained a pretty bad reputation security-wise. Worse, it’s not just Ruby’s reputation that’s at stake: these are real problems, and they need to be fixed before Ruby can provide a secure platform for trusted software.

I want to write security software in Ruby. I don’t think doing this is a good idea if Ruby’s security story is a joke. So I want to help fix the problem. I feel some type of reasonable trust root is needed to make this system work, and I can vouch for my own paranoia when it comes to detecting malicious behavior. I’d be happy to attempt to run a CA on behalf of the Ruby community, and potentially others, or I’d be happy to help design open source software that can be used for this purpose but administered by an independent body like Ruby Central.

In a Dream World: The Real Fix

I spend way too much time thinking about cryptographic security models. In doing so, I always want to use the latest, greatest tools for the job. In that regard, RubyGems’ homebrew signature algorithm falls down. So do DSA and ECDSA, as they’re both vulnerable to entropy failure, as was illustrated a few days ago when reused nonces were discovered in signatures on Bitcoin transactions. The real fix would involve a modern signature algorithm like Ed25519, which would necessitate a tool beyond RubyGems itself (which, by design, relies only on the Ruby standard library).
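To see why nonce reuse is fatal, here is the standard algebra for DSA and ECDSA in the usual textbook notation (not taken from the post): $n$ is the group order ($q$ in DSA), $d$ the private key, $k$ the per-signature nonce, $z_i$ the hash of message $i$, and $r$ a value derived from $k$ alone. Two signatures made with the same $k$ therefore share $r$, and anyone holding both (with $z_1 \ne z_2$, so $s_1 \ne s_2$) can solve first for $k$ and then for $d$:

```latex
\begin{aligned}
s_1 &\equiv k^{-1}\,(z_1 + r\,d) \pmod{n}, \qquad
s_2 \equiv k^{-1}\,(z_2 + r\,d) \pmod{n} \\
s_1 - s_2 &\equiv k^{-1}\,(z_1 - z_2) \pmod{n} \\
k &\equiv (z_1 - z_2)\,(s_1 - s_2)^{-1} \pmod{n} \\
d &\equiv (s_1\,k - z_1)\,r^{-1} \pmod{n}
\end{aligned}
```

Ed25519 sidesteps this particular failure by deriving its nonce deterministically from the private key and the message, so a broken random number generator at signing time cannot cause a repeat.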

As I mentioned earlier, I think project-specific certificates are the way to go. I don’t think it would be particularly hard to create a CA-like system that issues certificates not to the individual people who maintain gems but to projects: a certificate that binds the name of the gem to a project key and is in turn signed by a trusted root.
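One way to picture a project-level certificate, under heavy assumptions: the trust root signs a small JSON statement binding a gem name to a project public key, and clients accept a release only if the root vouches for the statement, the statement names the gem being installed, and the release is signed by the named key. The `ProjectCert` format and the helpers are hypothetical, and RSA stands in for Ed25519 only because it ships with Ruby's `openssl` library.

```ruby
require "openssl"
require "json"

# Hypothetical "project certificate": the trust root signs a statement binding
# a gem *name* to a project public key, instead of vouching for a person.
ProjectCert = Struct.new(:statement, :root_signature)

def issue_project_cert(root_key, gem_name, project_public_key)
  statement = JSON.generate({ "gem" => gem_name, "key" => [project_public_key.to_der].pack("m0") })
  ProjectCert.new(statement, root_key.sign(OpenSSL::Digest.new("SHA256"), statement))
end

# Client side: (1) the root vouches for the statement, (2) the statement names
# this gem, (3) the release was signed by the key the statement names.
def verify_release(root_public_key, cert, gem_name, package, package_sig)
  return false unless root_public_key.verify(OpenSSL::Digest.new("SHA256"), cert.root_signature, cert.statement)
  claims = JSON.parse(cert.statement)
  return false unless claims["gem"] == gem_name
  project_key = OpenSSL::PKey.read(claims["key"].unpack1("m0"))
  project_key.verify(OpenSSL::Digest.new("SHA256"), package_sig, package)
rescue OpenSSL::PKey::PKeyError, JSON::ParserError, ArgumentError
  false
end

root    = OpenSSL::PKey::RSA.new(2048)
project = OpenSSL::PKey::RSA.new(2048)
cert    = issue_project_cert(root, "hike", project.public_key)
release = "hike release bytes"
sig     = project.sign(OpenSSL::Digest.new("SHA256"), release)

p verify_release(root.public_key, cert, "hike", release, sig)  # => true
p verify_release(root.public_key, cert, "rails", release, sig) # => false (cert names a different gem)
```

Binding the certificate to the gem name, rather than to a person, is what lets a client reject a release of "hike" signed by some other valid key, even one the root has certified for a different project.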

If I were really to try to run a CA for RubyGems, I would probably try to run it at the project level, and try to have a nontrivial burden of proof that you are the actual owner of a project, including things like OAuth to your Github account and confirming your identity over multiple channels as I described earlier. I’d probably not rely on the existing RubyGems infrastructure at all but ship something separate that could ensure gems are loaded securely, using state-of-the-art cryptographic tools like RbNaCl, libsodium, and Ed25519.

I feel like the gems themselves should be the focus of authority, and the current RubyGems certificate system places way too much trust in individual users and does not provide a gem-centric authority system.

The whole goal is what I described earlier: a least-authority system in which, even if the entire RubyGems ecosystem were contaminated and RubyGems.org were serving nothing but malware, the client-side tool could detect the tampering and prevent the gems from even being loaded.

If you’re interested in this sort of system, hit me up on Twitter (I’m @bascule).

The Challenges Moving Forward

So I’m probably not going to build the dream RubyGems CA system described above. What can we do in the meantime? I think someone needs to step up and create some kind of CA system for gems, even if that someone is RubyGems.org itself.

The simplest option is to bypass the entire identity verification process and automatically issue signed certificates upon request. Doing so is ripe for abuse by active attackers, and it brings up another important aspect of designing a certificate system like this: revocation.

Let’s say the CA has issued someone a certificate, and later discovers it was duped and has just issued a certificate to Satan instead of Alice. Oops! The CA now needs to communicate that what it said about Alice before, yeah whoops, that’s totally wrong, turns out Alice is Satan. My bad!

Whatever model is applied to secure gems must support certificate revocation. Whatever modicum of trust a certificate authority can provide, whether it auto-issues certificates to logged-in users or is (at best) an unobtrusive volunteer-run CA, that trust will surely be violated at some point. When it is, there should be some way to inform people authenticating certificates that there are malicious certs out there that shouldn’t be trusted.

Whatever CA we trust needs to maintain a blacklist of revoked certificates, preferably one that can be authenticated with the CA’s public key. This is an essential component of any trust model with a central authority, and one that, as far as I can tell, hasn’t really been codified into the existing RubyGems software and its signature system.
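X.509 certificate revocation lists (CRLs) are one well-established way to publish such an authenticated blacklist. A minimal sketch using Ruby's `openssl` library, in which the CA, its name, and the revoked serial number are all hypothetical:

```ruby
require "openssl"

# Hypothetical CA: a self-signed root certificate.
ca_key  = OpenSSL::PKey::RSA.new(2048)
ca_cert = OpenSSL::X509::Certificate.new
ca_cert.version    = 2
ca_cert.serial     = 1
ca_cert.subject    = OpenSSL::X509::Name.parse("/CN=Example Gem CA")
ca_cert.issuer     = ca_cert.subject
ca_cert.public_key = ca_key.public_key
ca_cert.not_before = Time.now
ca_cert.not_after  = Time.now + 365 * 24 * 3600
ca_cert.sign(ca_key, OpenSSL::Digest.new("SHA256"))

# CA side: publish a signed list of revoked certificate serial numbers.
def build_crl(ca_cert, ca_key, revoked_serials, now = Time.now)
  crl = OpenSSL::X509::CRL.new
  crl.version     = 1 # X.509 v2 CRL
  crl.issuer      = ca_cert.subject
  crl.last_update = now
  crl.next_update = now + 24 * 3600
  revoked_serials.each do |serial|
    entry = OpenSSL::X509::Revoked.new
    entry.serial = serial
    entry.time   = now
    crl.add_revoked(entry)
  end
  crl.sign(ca_key, OpenSSL::Digest.new("SHA256"))
  crl
end

# Client side: refuse a list the CA didn't sign, then look for the serial.
def revoked?(crl, ca_cert, serial)
  unless crl.issuer.to_der == ca_cert.subject.to_der && crl.verify(ca_cert.public_key)
    raise "revocation list is not signed by this CA"
  end
  crl.revoked.any? { |entry| entry.serial.to_i == serial.to_i }
end

crl = build_crl(ca_cert, ca_key, [2]) # say serial 2 turned out to belong to Satan, not Alice
p revoked?(crl, ca_cert, 2) # => true
p revoked?(crl, ca_cert, 3) # => false
```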

Now What?

This is a problem I’d love to help solve. I don’t know the best solution. Perhaps we place all our trust in RubyGems, or perhaps we set up some other trust authority that maintains certificates. I think we can all agree whatever solution comes about should be fully open source and easily audited by anyone. Kerckhoffs wouldn’t have it any other way.

As the post title implies, I don’t have any definitive answers, but I think we need something to the tune of a CA to solve this problem, and for everyone to digitally sign their gems using certificates that are in turn signed by some central trust authority. So Say We All (until said authority turns out to be malicious, at which point we abandon it for a Better Trust Authority, and so on ad infinitum).

Building a trust model around how we ship software in the Ruby world is a hard problem, but it’s not an unsolvable one, and I think any good solutions will still work even in the wake of a total compromise of RubyGems.org.

In the words of That Mitchell and Webb Look: come on boffins, let’s get this sorted!

(Edit: If you are interested in this idea, please take a look at https://github.com/rubygems-trust. Also check out the #rubygems-trust IRC channel on Freenode)