The Death of Bitcoin

The road of innovation is paved with the corpses of outmoded technologies. Throughout history there are countless examples of a technology presumed to be the “next big thing” gaining traction, only to be made obsolete by another technology that solves the same problem better.

Will this happen to Bitcoin? I don’t know. Unfortunately I don’t have a crystal ball which can tell me that. However, I think it’s an interesting thing to speculate about. Bitcoin is described by enthusiasts as potentially being bigger than the Internet itself (a claim I can’t seem to understand, considering that Bitcoin is an Internet-powered technology), but what if the opposite happened, and Bitcoin wound up being as popular as Betamax cassettes or Napster in the grand scheme of things?

I don’t want to be entirely grim, despite this post’s title. This isn’t intended to be some “Bitcoin is dying”-style FUD piece. I think Bitcoin is actually succeeding just fine for now (although the hype may be out of proportion with its actual success). Where I think Bitcoin has problems, hopefully I can give some constructive advice on how Bitcoin developers can endeavor to improve the technology before an up-and-coming Cryptocurrency 2.0 replaces it.

Before I talk about Bitcoin, I would like to introduce you to some historical examples of when entire paths of technological development were completely abandoned, and draw some general conclusions about the circuitous paths innovation can take so I can discuss Bitcoin in the context of these approaches.

Incremental refinement: the Newcomen and Watt steam engines

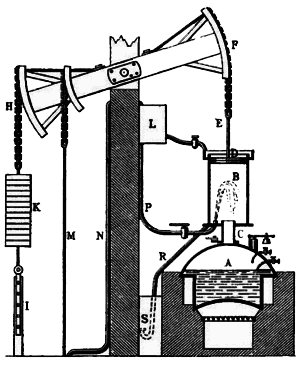

The steam engine was the workhorse of the Industrial Revolution. If you associate a name with the steam engine, it’s probably that of James Watt; however, hundreds of steam engines were hard at work pumping water out of British mines some fifty years before the Watt steam engine. These devices were Newcomen steam engines.

Watt’s steam engine came about when James Watt was working on repairing a Newcomen engine and had an important insight: the Newcomen engine was monumentally inefficient because the cylinder had to be heated and cooled repeatedly, wasting energy. Watt’s innovation was to add a separate condenser chamber to Newcomen’s existing design. This doubled the efficiency of these engines and, with the time wasted heating and cooling the cylinder eliminated, allowed them to run considerably faster. Instead of being relegated to the pumping of water, these engines were now poised to power things like factories and locomotives.

This can be likened to existing “altcoin” development. Many developers are forking Bitcoin and attempting to refine its design by experimenting with different proof-of-work functions, increasing the rate at which blocks are generated, or adding completely new features such as cryptographic privacy through zero-knowledge proofs, as seen in Zerocoin (which I sure hope isn’t broken!). Others, such as the Lightning Network, are working on moving most transactions off the blockchain itself and into payment channels.

Thus far, though, none of these changes have proved particularly compelling (except perhaps the addition of a lovable meme mascot), and Bitcoin’s adoption exceeds that of all altcoins by many orders of magnitude. Nevertheless, it remains to be seen whether some fundamental addition to the existing Bitcoin protocol holds the key to unlocking more widespread adoption.

Technological revolution: the Edison DC electrical system versus Tesla’s polyphase AC system

Edison is credited with the invention of the incandescent light bulb, although a great deal of credit should go to Lewis Latimer for his work on long-lasting carbon filaments, without which Edison’s bulbs would not have had a useful lifespan (another example of incremental refinement as a force multiplier). With an electrical device capable of replacing dangerous gas lamps at his disposal, Edison set out to electrify the world.

There was just one problem: Edison’s system was based on direct current, which didn’t scale well. DC systems of the era could not efficiently send electricity over long distances, because there was no practical way to step DC voltage up for transmission and back down for use, and at low voltage much of the power is lost as heat in the wires. Scaling DC electricity production therefore seemed to require lots and lots of small, highly distributed generating stations, each located a short distance from the devices it powered.
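The physics behind this is Joule heating: delivering power P at voltage V draws a current I = P/V, and a line with resistance R dissipates I²R as heat, so multiplying the transmission voltage by 100 cuts the line loss by a factor of 10,000. The sketch below uses made-up numbers (10 kW delivered over a 0.5 Ω line, at 110 V versus 11,000 V) purely for illustration, and its simplified model ignores voltage drop and reactive effects.

```python
def line_loss_watts(power_w: float, voltage_v: float, resistance_ohm: float) -> float:
    """Resistive (I^2 * R) loss in a line delivering power_w at voltage_v.

    Simplified model: the current is taken to be I = P / V, ignoring the
    voltage drop along the line and any reactive (AC) effects.
    """
    current_a = power_w / voltage_v
    return current_a ** 2 * resistance_ohm


# Hypothetical numbers, chosen only to illustrate the scaling.
POWER_W = 10_000.0          # 10 kW delivered to customers
LINE_RESISTANCE_OHM = 0.5   # resistance of the whole line

low = line_loss_watts(POWER_W, 110.0, LINE_RESISTANCE_OHM)
high = line_loss_watts(POWER_W, 11_000.0, LINE_RESISTANCE_OHM)

print(f"loss at 110 V:    {low:,.1f} W ({low / POWER_W:.1%} of the delivered power)")
print(f"loss at 11,000 V: {high:,.3f} W")
print(f"100x the voltage -> {low / high:,.0f}x less loss")
```

With these made-up numbers the 110 V line wastes about 4.1 kW, roughly 41% of the power it delivers, while the 11,000 V line wastes about 0.4 W.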

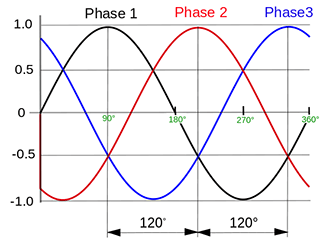

Enter Nikola Tesla and the polyphase AC system. The polyphase system is yet another example of incremental refinement: Tesla did not invent or discover alternating current, but did conceive of and design devices which brought about massive efficiency gains over Edison’s DC-based system through the use of multiple phases. The rest is history: AC scaled where DC did not, and the polyphase AC electrical system continues to be the primary means of distributing electrical power over long distances. Edison, despite having the first-mover advantage, lost the “war of the currents” because his system was technologically inferior. Polyphase AC was truly capable of operating at “world scale” and now, if only indirectly, touches the lives of practically every human on earth.

Towards a faster, more efficient cryptocurrency

You might notice that efficiency has been a persistent theme of the examples I’ve given. One of the biggest drivers for one technology replacing another is a new technology being massively more efficient than the incumbent it’s replacing.

This question has likely occurred to many who have seen pictures of large-scale Bitcoin mining operations. The sight of thousands upon thousands of computers cranking away at a cryptographic proof-of-work function has led many to ask whether the same resources could be applied to something useful, for example searching for a cure for cancer with those spare CPU (or these days, ASIC) cycles. As a technologist with an extensive background in cryptography, I don’t think it’s practical to have a cryptocurrency with a proof-of-work function that can cure cancer. But I too wonder whether there is a way to eliminate the need for a proof-of-work entirely, and whether a system that did so without sacrificing security would make for a more practical cryptocurrency.

Bitcoin uses a cryptographic proof-of-work to solve a number of problems. One is the so-called Sybil attack, in which an attacker exploits the cheap creation of identities (or of some particular role in a network) to gain control of the network. Another is ensuring, through a “consensus-by-lottery” mechanism, that the nodes which accept and republish completed transactions are selected effectively at random, with odds proportional to their share of the network’s computing power. So long as enough mutually suspicious parties participate in the network, no one group can decide which transactions are or aren’t allowed to go through.
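To make the lottery idea concrete, here is a minimal hashcash-style sketch, assuming a toy header encoding; the names `pow_hash`, `target_for`, `mine`, and `verify` are illustrative, not Bitcoin's API. Finding a winning nonce takes about 2^difficulty_bits attempts while checking one takes a single hash, so a participant's chance of producing the next block tracks its share of hashing power rather than the number of identities it controls.

```python
import hashlib
from typing import Optional


def pow_hash(header: bytes, nonce: int) -> int:
    """Double SHA-256 of header || nonce, read as a big-endian integer.

    Toy encoding: Bitcoin's real header layout and byte order differ.
    """
    data = header + nonce.to_bytes(8, "little")
    digest = hashlib.sha256(hashlib.sha256(data).digest()).digest()
    return int.from_bytes(digest, "big")


def target_for(difficulty_bits: int) -> int:
    """A hash wins if it is below this target; each extra bit halves the odds."""
    return 1 << (256 - difficulty_bits)


def mine(header: bytes, difficulty_bits: int, max_nonce: int = 1 << 32) -> Optional[int]:
    """Brute-force search: expected cost is about 2**difficulty_bits hashes."""
    target = target_for(difficulty_bits)
    for nonce in range(max_nonce):
        if pow_hash(header, nonce) < target:
            return nonce
    return None


def verify(header: bytes, nonce: int, difficulty_bits: int) -> bool:
    """Checking a claimed solution costs a single (double) hash."""
    return pow_hash(header, nonce) < target_for(difficulty_bits)


if __name__ == "__main__":
    found = mine(b"toy block header", difficulty_bits=16)
    print("winning nonce:", found, "valid:", verify(b"toy block header", found, 16))
```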

Bitcoin tunes the work factor so that the network produces new blocks at roughly 10-minute intervals. This is suitable for replacing asynchronous money transfers such as the Automated Clearing House (ACH) system used to move money between banks today, but unsuitable for things like retail payments, where a retailer wants to synchronously confirm a payment is approved before letting a customer leave with their purchase. If a retailer were to let a customer leave before confirming receipt of a payment, they would be opening themselves up to fraud, which is particularly problematic with Bitcoin as it lacks the traditional “chargeback” mechanisms to deal with fraud retroactively. (Note: the common advice from the Bitcoin community here seems to be to let the customer walk out the door and eat the loss if fraud happens. I guess they’re assuming fraud doesn’t exist?)
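Bitcoin keeps that pace by retargeting: every 2016 blocks (about two weeks at the intended rate), nodes rescale the proof-of-work target by how long those blocks actually took, limiting any single adjustment to a factor of four. Below is a simplified sketch with hypothetical names; it omits the real client's compact target encoding, the minimum-difficulty limit, and an off-by-one in how the elapsed time is measured.

```python
# Simplified model of Bitcoin's difficulty retargeting.
TARGET_SPACING_S = 10 * 60                                # desired seconds between blocks
RETARGET_INTERVAL = 2016                                  # blocks between adjustments
TARGET_TIMESPAN_S = TARGET_SPACING_S * RETARGET_INTERVAL  # two weeks


def retarget(old_target: int, actual_timespan_s: int) -> int:
    """Return the new target after RETARGET_INTERVAL blocks.

    A larger target means an easier puzzle. If the last interval took less
    time than intended, the target shrinks (mining gets harder). Each
    adjustment is clamped to a factor of 4 in either direction.
    """
    clamped = min(max(actual_timespan_s, TARGET_TIMESPAN_S // 4),
                  TARGET_TIMESPAN_S * 4)
    return old_target * clamped // TARGET_TIMESPAN_S


if __name__ == "__main__":
    # Hypothetical: blocks arrived twice as fast as intended (one week, not two).
    print(retarget(1 << 220, TARGET_TIMESPAN_S // 2) == 1 << 219)
```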

There’s nothing particularly magical about a 10-minute interval. It’s simply what Satoshi chose, and many altcoins have experimented with shortening it. With a coordinated effort, I believe it would be possible to move Bitcoin itself toward faster block generation, which in turn would help rein in the ballooning size of individual Bitcoin blocks, whose limit the core developers are planning to raise to a whopping 20MB per block soon.
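The relationship between block interval and block size is simple arithmetic: for a fixed transaction rate, the average block must hold rate × transaction size × interval bytes. The sketch assumes a hypothetical workload of 7 transactions per second at 250 bytes each and ignores block header overhead; the function names are illustrative.

```python
SECONDS_PER_DAY = 86_400


def block_bytes(tx_per_second: float, avg_tx_bytes: float, block_interval_s: float) -> float:
    """Average block size needed to carry a given transaction rate."""
    return tx_per_second * avg_tx_bytes * block_interval_s


def chain_growth_per_day(tx_per_second: float, avg_tx_bytes: float) -> float:
    """Bytes of transactions added to the chain per day (headers ignored).

    The block interval does not appear: it only changes how the same data
    is sliced into blocks.
    """
    return tx_per_second * avg_tx_bytes * SECONDS_PER_DAY


# Hypothetical workload: 7 transactions per second, 250 bytes each.
for interval_s in (600, 60, 10):
    print(f"{interval_s:>4} s blocks: {block_bytes(7, 250, interval_s):>11,} bytes per block")
print(f"chain growth: {chain_growth_per_day(7, 250):,} bytes per day at any interval")
```

Cutting the interval tenfold shrinks each block tenfold, while the chain as a whole still grows by the same amount per day (about 151 MB in this hypothetical).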

However, if the requirement for a proof-of-work were eliminated entirely, the system would not just be massively more efficient (e.g. by eliminating mining costs in the form of hardware and electricity), but could also come to consensus much faster. Instead of a 10-minute window (or longer, depending on whether the winning miner includes your transaction in its block), a cryptocurrency without a proof-of-work function could potentially come to consensus and process transactions at a rate comparable to the existing credit card system. This would make cryptocurrency practical in retail scenarios where merchants depend on quick answers about whether a payment was accepted.
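One way to quantify the retail problem is to model block discovery as a Poisson process, a standard approximation in which the time between blocks is exponentially distributed with a 10-minute mean. The sketch below (function names hypothetical) computes the chance that any block at all arrives during a checkout, and the expected wait for several confirmations, assuming the transaction makes it into the next block.

```python
import math

MEAN_BLOCK_INTERVAL_S = 600.0  # Bitcoin's target spacing


def prob_block_within(seconds: float, mean_interval_s: float = MEAN_BLOCK_INTERVAL_S) -> float:
    """P(at least one block is found within `seconds`), with exponentially
    distributed inter-block times (a Poisson process)."""
    return 1.0 - math.exp(-seconds / mean_interval_s)


def expected_wait_s(confirmations: int, mean_interval_s: float = MEAN_BLOCK_INTERVAL_S) -> float:
    """Expected time until `confirmations` blocks have been found."""
    return confirmations * mean_interval_s


if __name__ == "__main__":
    checkout_s = 30.0  # hypothetical time a customer is willing to wait
    print(f"P(block within {checkout_s:.0f} s) = {prob_block_within(checkout_s):.1%}")
    print(f"expected wait for 6 confirmations: {expected_wait_s(6) / 60:.0f} min")
```

With a hypothetical 30-second checkout, the chance of even one block arriving is only about 4.9%, and waiting for six confirmations, a common rule of thumb, takes an hour on average.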

There are many potential “Bitcoin killer” technologies working on this sort of proof-of-work-free distributed consensus problem. I’m not sure any of them are actually ready to replace Bitcoin today. However, at least in my opinion, this is not an intractable problem. The problems of “Sybilproofing” the system and preventing selective denial-of-service attacks remain and require creative solutions. Fortunately, people are experimenting with such solutions.

There are at least three systems working on this approach today which I find particularly compelling:

Stellar’s SCP: more than just a cryptocurrency, Stellar is built on an internet-scale distributed consensus protocol (the “Stellar Consensus Protocol” or SCP, not to be confused with “secure copy”) developed using formal methods and carrying a proof of correctness. Distributed systems are notoriously tricky, and, with perhaps the notable exception of Bitcoin, distributed consensus protocols (e.g. Paxos, Raft) are generally developed using formal methods and carry similar proofs. SCP relies on nodes being configured with a “trusted” set of gateways to defeat Sybil attacks. It remains to be seen how well this will work in practice.

Hyperledger is a distributed transaction ledger based on an algorithm called Practical Byzantine Fault Tolerance (PBFT) which is formally proven to be correct. Hyperledger specifically takes the viewpoint that it is not a cryptocurrency, but instead a consensus protocol for keeping a decentralized transaction ledger that can be denominated in the currency of your choice.

Tendermint is a distributed transaction ledger based on the formally proven DLS algorithm (however Tendermint itself does not carry a similar formal proof). To prevent Sybil attacks, Tendermint uses a “proof of stake” where new verifiers must “buy in” to the network and participate honestly for a probationary period or else they lose the bond they bought into the network with. It remains to be seen if Tendermint is sound from a distributed systems perspective, and if the “proof of stake” approach can prevent Sybil attacks in practice.

The list goes on. There’s also Nxt, Ethereum’s Slasher protocol, and Peercoin’s Duplicate Stake Protocol among others.
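PBFT, the algorithm Hyperledger builds on, tolerates up to f Byzantine (arbitrarily faulty) replicas out of n ≥ 3f + 1 by requiring agreement from quorums large enough that any two of them overlap in at least one honest replica. The arithmetic is sketched below with hypothetical function names; the general quorum size ceil((n + f + 1) / 2) reduces to PBFT's familiar 2f + 1 when n = 3f + 1.

```python
def max_byzantine_faults(n: int) -> int:
    """Largest f with n >= 3f + 1, the bound PBFT-style protocols require."""
    return (n - 1) // 3


def quorum_size(n: int) -> int:
    """Smallest quorum such that any two quorums share at least f + 1 nodes,
    so at least one honest node is in both: ceil((n + f + 1) / 2)."""
    f = max_byzantine_faults(n)
    return (n + f) // 2 + 1


def check(n: int) -> None:
    f, q = max_byzantine_faults(n), quorum_size(n)
    assert 2 * q - n >= f + 1, "two quorums must intersect in an honest node"
    assert q <= n - f, "a quorum must be reachable even if f nodes stay silent"


for n in (4, 7, 10, 100):
    check(n)
    print(f"n={n}: tolerates f={max_byzantine_faults(n)} faulty, quorum={quorum_size(n)}")
```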

I’m not saying that Stellar, Hyperledger, Tendermint, etc. are “Bitcoin killers”. That remains to be seen. However, they demonstrate alternative solutions to the general problem of cryptocurrency which are not only faster and more efficient, but are also developed with formal methods and built on algorithms proven correct. These technologies meet my personal rubric, as an enthusiast of both cryptography and distributed systems, for what I would like to see out of a next-generation cryptocurrency.

It’s worth noting that unlike Stellar/SCP, the PBFT algorithm Hyperledger is based on, or the DLS algorithm that Tendermint is based on, Bitcoin has no formal proof of correctness. On the contrary, it has been proved that Bitcoin is broken from a theoretical perspective in that it fails to solve the Byzantine agreement problem, and it has been demonstrated in practice (and is generally known, at least among experts) that Bitcoin breaks in the event of a network partition, which in Bitcoin manifests as a “blockchain fork”. In the event of such a fork, one branch will win and one branch will lose, and transactions in the losing fork are completely lost.

Losing accepted writes (or in Bitcoin’s case, “transactions”) is a property that is generally looked down upon in distributed systems. Distributed systems usually either stay highly available by sacrificing consistency, or sacrifice availability to maintain consistency (AP and CP in the CAP theorem, respectively). In the event of a prolonged network partition, the Bitcoin blockchain forks, and when the partition is healed all of the transactions in the losing fork are lost.

I don’t think this makes a lot of sense for a digital currency. Goods could have changed hands on the assumption that they were paid for, only for merchants to discover, once the network partition heals, that the bitcoins they thought they had received have vanished without a trace.
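The fork behavior can be modeled in a few lines. This is a toy sketch, assuming each branch is a list of the blocks mined on one side of a partition after their last common ancestor; the `Block` type, its work units, and the first-seen tie-break are simplifications rather than Bitcoin Core's actual data structures. Nodes follow the branch with the most cumulative proof-of-work, so whatever was confirmed only on the other branch stops being confirmed.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Block:
    """Toy block: an amount of work and the ids of the transactions it confirms."""
    work: int
    txs: frozenset[str]


def resolve_fork(branch_a: list[Block], branch_b: list[Block]) -> tuple[list[Block], set[str]]:
    """Pick between two branches that extend the same common ancestor.

    Follows the most-cumulative-work rule; on a tie, branch_a (assumed to be
    the branch this node saw first) is kept. Returns the winning branch and
    the transactions confirmed only on the losing branch, which are no longer
    confirmed once the node switches to the winner.
    """
    work_a = sum(block.work for block in branch_a)
    work_b = sum(block.work for block in branch_b)
    winner, loser = (branch_a, branch_b) if work_a >= work_b else (branch_b, branch_a)
    on_winner = set().union(*(block.txs for block in winner))
    on_loser = set().union(*(block.txs for block in loser))
    return winner, on_loser - on_winner


# Hypothetical partition: side A mines two blocks, side B mines three.
side_a = [Block(1, frozenset({"pay-merchant"})), Block(1, frozenset({"t2"}))]
side_b = [Block(1, frozenset({"t2"})), Block(1, frozenset({"t3"})), Block(1, frozenset({"t4"}))]
winner, unconfirmed = resolve_fork(side_a, side_b)
print("side B wins:", winner is side_b, "| no longer confirmed:", unconfirmed)
```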

A Bitcoin crypto-meltdown

Being replaced by a superior technology isn’t the only thing that could destroy Bitcoin. A catastrophic failure of Bitcoin’s underlying cryptography could also bring down the entire system, or at least people’s confidence in it. As my background is primarily in cryptography, and I have been particularly interested in elliptic curve cryptography (ECC), I find this a particularly fun thing to speculate about. It’s also interesting to note that the Bitcoin Wiki has practically nothing to say on this topic, offering only a short, one-sentence defense of Bitcoin’s cryptography:

SHA-256 and ECDSA are considered very strong currently, but they might be broken in the far future.

Bitcoin uses an algorithm called the Elliptic Curve Digital Signature Algorithm (ECDSA), over the secp256k1 curve, to implement “wallets”. In Bitcoin, each “wallet” holds ECDSA keypairs, with a private key allowing you to move money and a public key from which a recipient address is derived. The intent to move funds from wallet to wallet is certified by using ECDSA to sign an intended transaction. This signed transaction is what gets published to miners for eventual insertion into the Bitcoin blockchain.
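To make the signing flow concrete, here is a minimal textbook ECDSA sketch over secp256k1 in pure Python (3.8+, for `pow(x, -1, m)`). It is an illustration, not Bitcoin's actual code: the message hashing, the transaction string, and the helper names (`keygen`, `sign`, `verify`) are assumptions, real clients add details such as DER signature encoding, and this scalar multiplication is not constant-time.

```python
import hashlib
import secrets

# secp256k1: y^2 = x^3 + 7 over GF(P), base point G of prime order N.
P = 2**256 - 2**32 - 977
N = 0xFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFEBAAEDCE6AF48A03BBFD25E8CD0364141
G = (0x79BE667EF9DCBBAC55A06295CE870B07029BFCDB2DCE28D959F2815B16F81798,
     0x483ADA7726A3C4655DA4FBFC0E1108A8FD17B448A68554199C47D08FFB10D4B8)


def point_add(p1, p2):
    """Affine point addition; None stands for the point at infinity."""
    if p1 is None:
        return p2
    if p2 is None:
        return p1
    (x1, y1), (x2, y2) = p1, p2
    if x1 == x2 and (y1 + y2) % P == 0:
        return None
    if p1 == p2:
        lam = 3 * x1 * x1 * pow(2 * y1, -1, P) % P
    else:
        lam = (y2 - y1) * pow(x2 - x1, -1, P) % P
    x3 = (lam * lam - x1 - x2) % P
    return x3, (lam * (x1 - x3) - y1) % P


def scalar_mult(k, point):
    """Double-and-add: simple but NOT constant time."""
    result = None
    while k:
        if k & 1:
            result = point_add(result, point)
        point = point_add(point, point)
        k >>= 1
    return result


def hash_to_int(message: bytes) -> int:
    # Toy choice: single SHA-256 of raw bytes (Bitcoin signs a double-SHA-256
    # digest of a specific transaction serialization).
    return int.from_bytes(hashlib.sha256(message).digest(), "big")


def keygen():
    d = secrets.randbelow(N - 1) + 1      # private key: authorizes spending
    return d, scalar_mult(d, G)           # public key: addresses derive from it


def sign(d: int, message: bytes):
    z = hash_to_int(message)
    while True:
        k = secrets.randbelow(N - 1) + 1  # fresh random nonce per signature
        r = scalar_mult(k, G)[0] % N
        s = pow(k, -1, N) * (z + r * d) % N
        if r and s:
            return r, s


def verify(pub, message: bytes, sig) -> bool:
    r, s = sig
    if not (1 <= r < N and 1 <= s < N):
        return False
    w = pow(s, -1, N)
    z = hash_to_int(message)
    point = point_add(scalar_mult(z * w % N, G), scalar_mult(r * w % N, pub))
    return point is not None and point[0] % N == r


if __name__ == "__main__":
    priv, pub = keygen()
    tx = b"move 0.1 BTC from wallet A to wallet B"  # hypothetical encoding
    sig = sign(priv, tx)
    print("valid:", verify(pub, tx, sig))
    print("tampered valid:", verify(pub, tx + b"0", sig))
```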

ECDSA has been heavily criticized by cryptographers like Dan Bernstein for being brittle and error-prone, and bad implementations of ECDSA have leaked Bitcoin private keys in practice (for example, by reusing the random per-signature nonce). Because of this, authors of Bitcoin clients should probably implement deterministic signature schemes such as the one described in RFC 6979; unless they do, there is an ever-present risk that the private keys to wallets might be inadvertently leaked.
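Here is why the nonce matters so much, as a minimal sketch with hypothetical helper names: if the same nonce k is ever used to sign two different messages, the two signatures share the same r, and simple modular algebra recovers k and then the private key. RFC 6979 prevents this by deriving k deterministically from the private key and the message hash, so distinct messages get distinct nonces and no random number generator is involved.

```python
import hashlib
import secrets

# secp256k1: y^2 = x^3 + 7 over GF(P), base point G of prime order N.
P = 2**256 - 2**32 - 977
N = 0xFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFEBAAEDCE6AF48A03BBFD25E8CD0364141
G = (0x79BE667EF9DCBBAC55A06295CE870B07029BFCDB2DCE28D959F2815B16F81798,
     0x483ADA7726A3C4655DA4FBFC0E1108A8FD17B448A68554199C47D08FFB10D4B8)


def point_add(p1, p2):
    """Affine point addition; None stands for the point at infinity."""
    if p1 is None:
        return p2
    if p2 is None:
        return p1
    (x1, y1), (x2, y2) = p1, p2
    if x1 == x2 and (y1 + y2) % P == 0:
        return None
    if p1 == p2:
        lam = 3 * x1 * x1 * pow(2 * y1, -1, P) % P
    else:
        lam = (y2 - y1) * pow(x2 - x1, -1, P) % P
    x3 = (lam * lam - x1 - x2) % P
    return x3, (lam * (x1 - x3) - y1) % P


def scalar_mult(k, point):
    result = None
    while k:
        if k & 1:
            result = point_add(result, point)
        point = point_add(point, point)
        k >>= 1
    return result


def hash_to_int(message: bytes) -> int:
    return int.from_bytes(hashlib.sha256(message).digest(), "big")


def sign_with_nonce(d: int, message: bytes, k: int):
    """Textbook ECDSA, but with the nonce k supplied by the caller (the bug)."""
    r = scalar_mult(k, G)[0] % N
    s = pow(k, -1, N) * (hash_to_int(message) + r * d) % N
    return r, s


def recover_private_key(m1: bytes, sig1, m2: bytes, sig2) -> int:
    """From s_i = k^-1 (z_i + r*d) mod N for two messages signed with one k:
    s1 - s2 = k^-1 (z1 - z2), so k = (z1 - z2) / (s1 - s2), d = (s1*k - z1) / r."""
    (r1, s1), (r2, s2) = sig1, sig2
    if r1 != r2:
        raise ValueError("signatures do not share a nonce")
    z1, z2 = hash_to_int(m1), hash_to_int(m2)
    k = (z1 - z2) * pow(s1 - s2, -1, N) % N
    return (s1 * k - z1) * pow(r1, -1, N) % N


if __name__ == "__main__":
    d = secrets.randbelow(N - 1) + 1
    k = secrets.randbelow(N - 1) + 1      # the nonce a buggy wallet reuses
    sig1 = sign_with_nonce(d, b"first transaction", k)
    sig2 = sign_with_nonce(d, b"second transaction", k)
    recovered = recover_private_key(b"first transaction", sig1, b"second transaction", sig2)
    print("private key recovered:", recovered == d)
```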

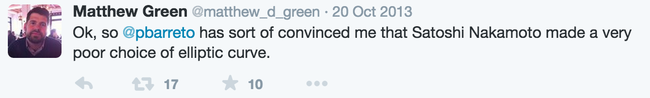

Unfortunately, there is a “cute”-but-hard-to-detect attack possible in backdoored implementations of secp256k1 implementing RFC 6979 (this is what Matt Green was referring to in the above tweet):

…there are random bitflips in the multiply used to derive R from K, because the twist of secp256k1 is a smooth field where solving the DLP is relatively easy a single bit-flip in the multiply can result in a R value from which K can be recovered in about 2^51 work.

Even when guarding against reuse or bias in the nonce K (from which the signature’s R value is derived) by using RFC 6979 deterministic signatures, all a backdoor needs to leak private keys is a single bit flip in the multiply function: recovering K from a single signature is enough to compute the signer’s private key.

Implementations of secp256k1 have also been vulnerable to side-channel attacks, in which a malicious piece of software running on the same hardware recovers Bitcoin private keys by observing cache hits and misses. The “Just A Little Bit” and “Just A Little Bit More” attacks used a flush+reload side-channel attack to recover private keys from the ECDSA implementation used in OpenSSL.

The secp256k1 C library available on the Bitcoin GitHub organization is theoretically not vulnerable to these attacks; however, its approach to avoiding them presently relies on manual verification of the assembly generated by C compilers, a brittle approach at best.
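Cache-timing attacks like these work when the sequence of operations or memory accesses during scalar multiplication depends on secret bits. The toy below is not a reproduction of the flush+reload attacks: it stands in integers under addition for elliptic curve points and records the operations performed by naive double-and-add versus a Montgomery ladder with a mask-based conditional swap. Python itself gives no constant-time guarantees, and real constant-time code must also avoid secret-dependent memory lookups; this only illustrates the shape of the problem.

```python
def double_and_add(k: int, base: int, bits: int):
    """Left-to-right double-and-add over a toy group (integers under +).

    The add only runs for 1 bits, so the operation sequence, and with it the
    timing and memory-access pattern, depends on the secret k.
    """
    acc, trace = 0, []
    for i in reversed(range(bits)):
        acc = acc + acc                 # "double"
        trace.append("D")
        if (k >> i) & 1:                # secret-dependent branch
            acc = acc + base            # "add"
            trace.append("A")
    return acc, trace


def cswap(a: int, b: int, bit: int):
    """Swap a and b iff bit == 1, via masking rather than a branch.

    Assumes non-negative ints; -1 is all ones in Python's two's-complement view.
    """
    mask = (a ^ b) & -bit
    return a ^ mask, b ^ mask


def montgomery_ladder(k: int, base: int, bits: int):
    """Every iteration performs one add and one double whatever the key bit;
    the invariant r1 - r0 == base holds throughout, and r0 ends as k * base."""
    r0, r1, trace = 0, base, []
    for i in reversed(range(bits)):
        bit = (k >> i) & 1
        r0, r1 = cswap(r0, r1, bit)
        r1 = r0 + r1                    # "add"
        r0 = r0 + r0                    # "double"
        trace += ["A", "D"]
        r0, r1 = cswap(r0, r1, bit)
    return r0, trace


if __name__ == "__main__":
    for key in (0b10000001, 0b11111111):  # hypothetical 8-bit "keys"
        _, naive = double_and_add(key, 1, 8)
        _, ladder = montgomery_ladder(key, 1, 8)
        print(f"{key:08b}  double-and-add: {''.join(naive):<16}  ladder: {''.join(ladder)}")
```

For the two sample keys, the double-and-add traces differ (their length even reveals how many 1 bits the key has), while the ladder's traces are identical.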

How can Bitcoin avoid these sorts of attacks in perpetuity?

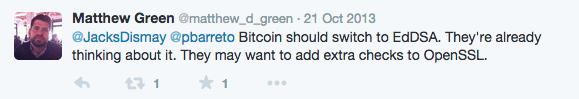

EdDSA is a next-generation digital signature algorithm developed by a team including Dan Bernstein and Tanja Lange. It uses a new type of elliptic curve called a twisted Edwards curve, and derives each signature’s nonce deterministically from the private key and the message, sidestepping the nonce pitfalls described above. Its best-known instance, Ed25519, uses a curve that is a sort of made-for-signatures counterpart to the increasingly popular Curve25519 (the two are birationally equivalent), which was just selected by the IRTF’s CFRG to be the next-generation elliptic curve used in TLS. Ed25519 is already supported by OpenSSH and GPG.
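For comparison, here is a compact pure-Python Ed25519 sketch modeled on the style of the reference code in RFC 8032 (the function names are my own). The property that matters for the attacks above is in `sign`: the per-signature nonce `r` is derived by hashing a secret prefix together with the message, so no random number generator is involved and signing the same message twice yields the identical signature. It is slow, not constant-time, and skips validation a production library performs, so treat it as an illustration only.

```python
import hashlib

# edwards25519: -x^2 + y^2 = 1 + d*x^2*y^2 over GF(p); q is the base point's order.
p = 2**255 - 19
q = 2**252 + 27742317777372353535851937790883648493
d = -121665 * pow(121666, p - 2, p) % p
SQRT_M1 = pow(2, (p - 1) // 4, p)


def sha512_int(data: bytes) -> int:
    return int.from_bytes(hashlib.sha512(data).digest(), "little")


def point_add(P, Q):
    """Add points in extended coordinates (X, Y, Z, T), with x = X/Z, y = Y/Z."""
    A = (P[1] - P[0]) * (Q[1] - Q[0]) % p
    B = (P[1] + P[0]) * (Q[1] + Q[0]) % p
    C = 2 * P[3] * Q[3] * d % p
    D = 2 * P[2] * Q[2] % p
    E, F, G, H = B - A, D - C, D + C, B + A
    return (E * F % p, G * H % p, F * G % p, E * H % p)


def point_mul(s, P):
    Q = (0, 1, 1, 0)  # neutral element
    while s > 0:
        if s & 1:
            Q = point_add(Q, P)
        P = point_add(P, P)
        s >>= 1
    return Q


def point_equal(P, Q):
    return (P[0] * Q[2] - Q[0] * P[2]) % p == 0 and (P[1] * Q[2] - Q[1] * P[2]) % p == 0


def recover_x(y, sign):
    """Solve the curve equation for x, picking the root whose low bit is `sign`."""
    if y >= p:
        return None
    x2 = (y * y - 1) * pow(d * y * y + 1, p - 2, p) % p
    if x2 == 0:
        return None if sign else 0
    x = pow(x2, (p + 3) // 8, p)
    if (x * x - x2) % p != 0:
        x = x * SQRT_M1 % p
    if (x * x - x2) % p != 0:
        return None
    return p - x if (x & 1) != sign else x


BASE_Y = 4 * pow(5, p - 2, p) % p
BASE_X = recover_x(BASE_Y, 0)
BASE = (BASE_X, BASE_Y, 1, BASE_X * BASE_Y % p)


def compress(P) -> bytes:
    zinv = pow(P[2], p - 2, p)
    x, y = P[0] * zinv % p, P[1] * zinv % p
    return (y | ((x & 1) << 255)).to_bytes(32, "little")


def decompress(s: bytes):
    y = int.from_bytes(s, "little")
    sign, y = y >> 255, y & ((1 << 255) - 1)
    x = recover_x(y, sign)
    return None if x is None else (x, y, 1, x * y % p)


def expand_secret(secret: bytes):
    h = hashlib.sha512(secret).digest()
    a = int.from_bytes(h[:32], "little")
    a &= (1 << 254) - 8   # clear the 3 low bits and everything above bit 253
    a |= 1 << 254         # then set bit 254
    return a, h[32:]


def public_key(secret: bytes) -> bytes:
    return compress(point_mul(expand_secret(secret)[0], BASE))


def sign(secret: bytes, msg: bytes) -> bytes:
    a, prefix = expand_secret(secret)
    r = sha512_int(prefix + msg) % q   # deterministic nonce: a hash, not an RNG
    R = compress(point_mul(r, BASE))
    k = sha512_int(R + public_key(secret) + msg) % q
    return R + ((r + k * a) % q).to_bytes(32, "little")


def verify(public: bytes, msg: bytes, sig: bytes) -> bool:
    A, R = decompress(public), decompress(sig[:32])
    s = int.from_bytes(sig[32:], "little")
    if A is None or R is None or s >= q:
        return False
    k = sha512_int(sig[:32] + public + msg) % q
    return point_equal(point_mul(s, BASE), point_add(R, point_mul(k, A)))


if __name__ == "__main__":
    sk = bytes(32)  # hypothetical all-zero seed, for demonstration only
    sig = sign(sk, b"tx")
    print(verify(public_key(sk), b"tx", sig), sign(sk, b"tx") == sig)
```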

Does any of this add up to a crypto meltdown? No, at least, not today. But the digital signature algorithm and elliptic curve used by Bitcoin do not inspire confidence, and in my opinion leave way too much wiggle room for hard-to-discover backdoors to possibly be inserted into popular clients.

Conclusion

Bitcoin proved that a cryptocurrency is possible. However, the solution Bitcoin provided is quite literally a “brute force” solution, and one that does not gracefully tolerate network partitions. This approach made Bitcoin simple and easy to reason about for what it is, and Bitcoin is possibly the only distributed consensus algorithm without a formal proof of correctness to have seen widespread adoption.

But Bitcoin is slow to come to consensus, monumentally inefficient, and loses data in the event of prolonged partitions between peers in the network. These partitions can take many forms, such as loss of network connectivity or incompatibilities between software versions.

Bitcoin is an interesting hack when it comes to a cryptocurrency, but a lot of the excitement (or pejoratively, hype) around Bitcoin today seems to be around extending the cryptocurrency into a sort of general decentralized database and consensus system. There’s talk of moving practically anything you can think of into the “blockchain”: digital identity, DNS, instant messaging, even entire governments. One startup that has seen a baffling amount of investment, and that wants to put Bitcoin mining ASICs into every device up to and including toasters, sees Bitcoin as “a fundamental system resource on par with CPU, bandwidth, hard drive space, and RAM”.

I don’t think Bitcoin is the correct technology to build these sorts of ideas on. I understand and strongly sympathize with the desire to move to decentralized systems and plan on eventually working in that space myself, but between Bitcoin’s efficiency problems and poor tolerance of network partitions, I do not think it’s suitable as a general purpose global decentralized database in the way people want to use it.

The next generation of decentralized consensus protocols seems much better suited to this task. In the words of Fred Brooks, the question “is not whether to build a pilot system and throw it away. You will do that. […] Hence plan to throw one away; you will, anyhow.”